Collect User Feedback

User feedback shows how well your AI system is performing. Collect it throughout your app's lifecycle, from development through prototype testing to production, to evaluate quality and improve the pipeline.

About This Task

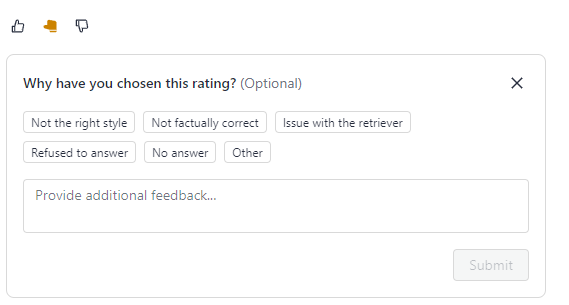

You or your users can rate each answer as accurate (thumbs up), fairly accurate (thumbs sideways), or inaccurate (thumbs down). After selecting a rating, users can explain it by choosing predefined reasons, called tags, or by adding a comment in the text field.

Admins can add feedback tags to a pipeline through the Pipeline Details page or REST API endpoints. Users can then select the applicable tags when rating an answer, which helps classify feedback entries.

Before moving your pipeline to production, you can share its prototype and let the users test it. For information on how to do this, see Share a Pipeline Prototype.

Setting Up a Feedback System in Production

Collect feedback from your users to monitor your pipeline's performance over time, identify potential issues (such as data drift), and improve your pipeline.

To collect feedback:

-

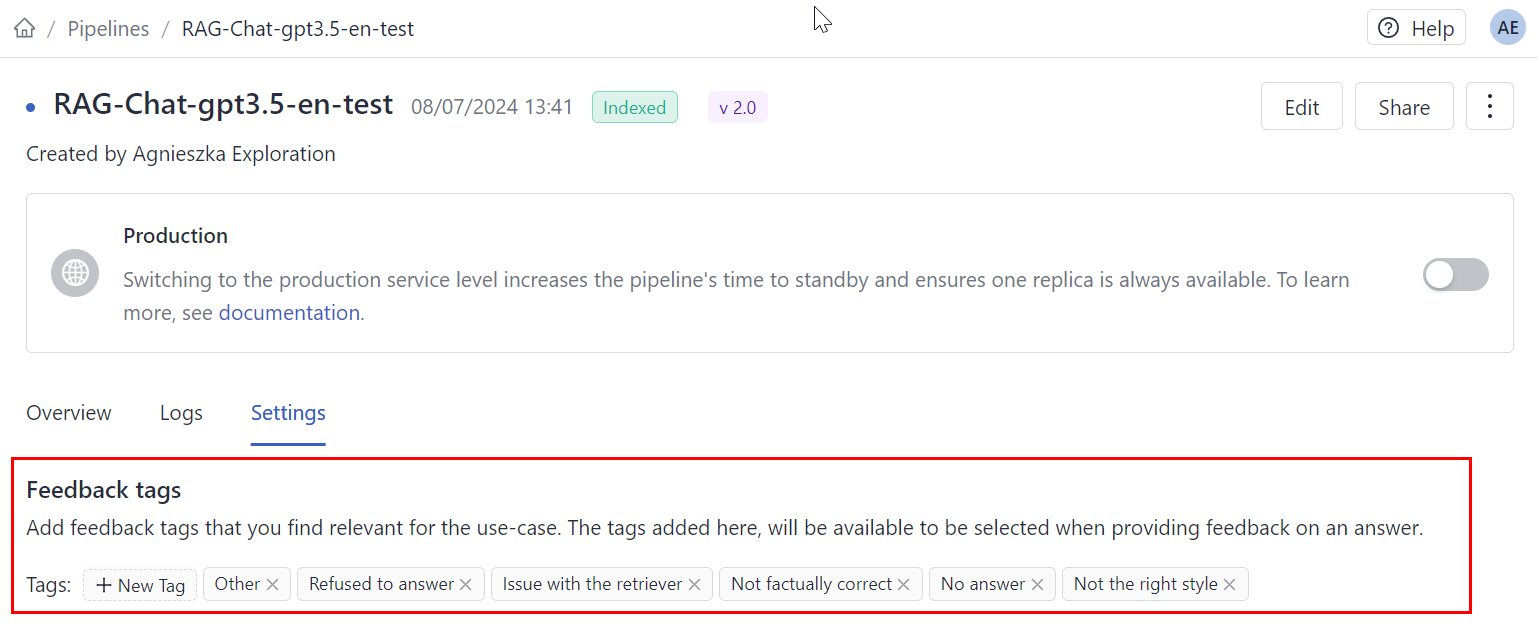

Add tags you want your users to select when assigning ratings to answers. Tags are specific to a pipeline.

To add tags in Haystack Platform:- Go to Pipelines and click the name of the pipeline you want to add tags to. This opens the Pipeline Details page.

- Go to the Settings tab and add feedback tags.

-

Add the "thumbs" buttons and a text field in your UI.

-

Send requests with the feedback to Haystack Platform's Create Feedback API endpoint.

If you're using the Haystack Platform UI, the feedback system is added to each pipeline by default. You just need to add tags to your pipeline, if needed.
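If you build your own UI instead, the last step above might look roughly like the following sketch. It is not the documented request format: the base URL, the endpoint path, and the field names (`result_id`, `score`, `tags`, `comment`) are all hypothetical placeholders, so check the Create Feedback API reference for the real ones. The sketch only prepares the request and does not send it.

```python
import json
import urllib.request

# Hypothetical placeholder host, not the real API address.
API_BASE = "https://api.example.com"
# The three ratings described on this page; the string values are assumed.
ALLOWED_RATINGS = {"accurate", "fairly_accurate", "inaccurate"}


def build_feedback_payload(result_id, rating, tags=None, comment=None):
    """Build the JSON body for one feedback entry (field names are assumed)."""
    if rating not in ALLOWED_RATINGS:
        raise ValueError(f"unknown rating: {rating!r}")
    payload = {"result_id": result_id, "score": rating}
    if tags:
        payload["tags"] = list(tags)
    if comment:
        payload["comment"] = comment.strip()
    return payload


def build_feedback_request(payload, api_key, url):
    """Prepare (but do not send) a POST request carrying the feedback."""
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


payload = build_feedback_payload(
    "result-123", "inaccurate", tags=["outdated"], comment=" Cites the 2019 policy. "
)
request = build_feedback_request(
    payload, api_key="YOUR_API_KEY", url=f"{API_BASE}/feedback"  # hypothetical path
)
# To actually send it: urllib.request.urlopen(request)
```

Validating the rating before building the request keeps malformed entries out of your feedback data, whatever the final field names turn out to be.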

Analyzing Feedback

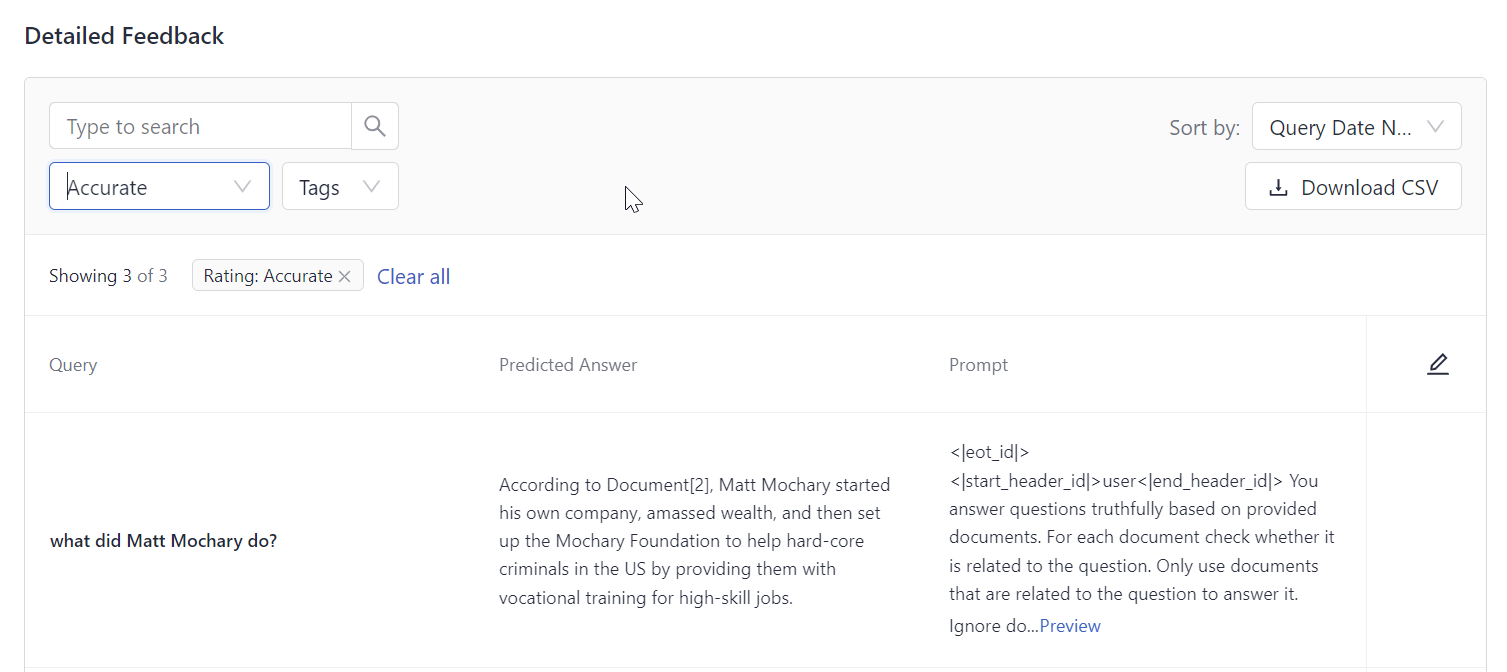

You can analyze feedback on the Pipeline Details page. Click the name of the pipeline whose feedback you want to view and scroll down to the Detailed Feedback section:

For each entry, you can check the query, the answer, and the feedback, and you can download all feedback as a CSV file. The same data is available through the REST API endpoints: Get Paginated Feedback (returns detailed feedback), Get Pipeline Feedback Stats (returns feedback statistics, such as the count of entries, the number of users, and average accuracy), and Export Feedback (downloads a CSV file with all feedback).
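Once you have the CSV file, a short script can summarize it. The sketch below counts ratings and tags. It assumes the export has `score` and `tags` columns and that multiple tags share one cell separated by semicolons; both are guesses, so adjust the column names and separator to match your actual file. The sample data is made up for illustration.

```python
import csv
import io
from collections import Counter


def summarize_feedback(csv_text, rating_column="score", tags_column="tags"):
    """Count ratings and tags in exported feedback (column names are assumed)."""
    ratings = Counter()
    tags = Counter()
    for row in csv.DictReader(io.StringIO(csv_text)):
        ratings[row[rating_column]] += 1
        # Assumes tags are stored in one cell, separated by semicolons.
        for tag in (row.get(tags_column) or "").split(";"):
            tag = tag.strip()
            if tag:
                tags[tag] += 1
    return ratings, tags


# Made-up sample rows standing in for a real export.
sample = """query,answer,score,tags
What is X?,X is Y,accurate,
Who made Z?,Nobody,inaccurate,wrong answer;outdated
When was Q?,1999,inaccurate,wrong answer
"""

ratings, tags = summarize_feedback(sample)
print(ratings.most_common())
print(tags.most_common())
```

Tag counts like these point to the most common reasons behind inaccurate ratings, which is where pipeline improvements are likely to pay off first.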
