DeepsetNvidiaDocumentEmbedder

Embed documents using embedding models by NVIDIA Triton.

Basic Information

- Type:

deepset_cloud_custom_nodes.embedders.nvidia.document_embedder.DeepsetNvidiaDocumentEmbedder - Components it most often connects with:

- PreProcessors:

DeepsetNvidiaDocumentEmbeddercan receive documents to embed from a PreProcessor, likeDocumentSplitter. DocumentWriter:DeepsetNvidiaDocumentEmbeddercan send embedded documents toDocumentWriterthat writes them into the document store.

- PreProcessors:

Inputs

| Parameter | Type | Default | Description |

|---|---|---|---|

| documents | List[Document] | The documents to embed. |

Outputs

| Parameter | Type | Default | Description |

|---|---|---|---|

| documents | List[Document] | Documents with their embeddings added to the metadata. | |

| meta | Dict[str, Any] | Metadata on usage statistics. |

Overview

NvidiaDocumentEmbedder uses NVIDTIA Triton models to embed a list of documents. It then adds the computed embeddings to the document's embedding metadata field.

This component runs on optimized hardware in Haystack Enterprise Platform, which means it doesn't work if you export it to a local Python file. If you're planning to export, use SentenceTransformersDocumentEmbedder instead.

Embedding Models in Query Pipelines and Indexes

The embedding model you use to embed documents in your indexing pipeline must be the same as the embedding model you use to embed the query in your query pipeline.

This means the embedders for your indexing and query pipelines must match. For example, if you use CohereDocumentEmbedder to embed your documents, you should use CohereTextEmbedder with the same model to embed your queries.

Usage Example

Using the Component in an Index

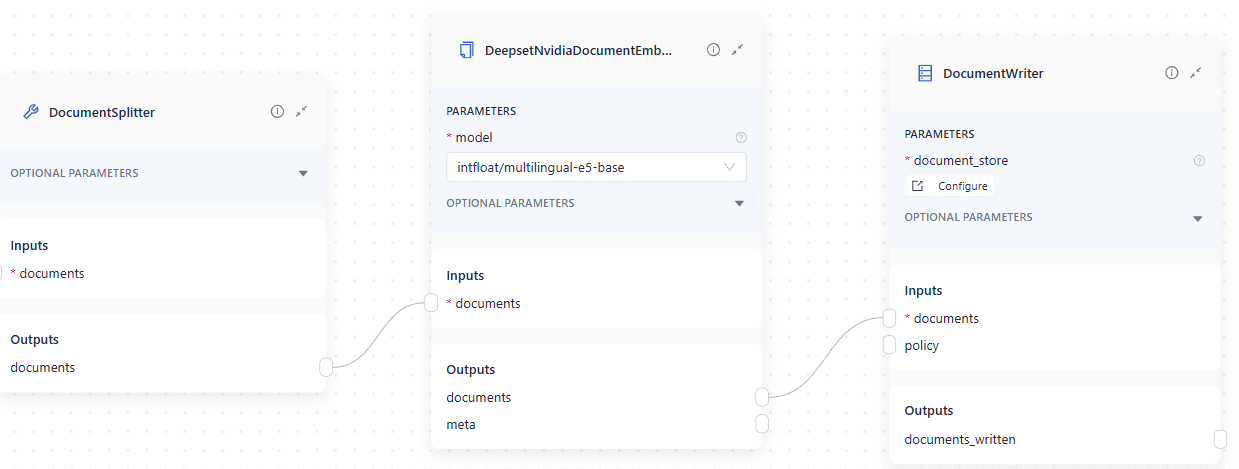

This is an example of a DeepsetNvidiaDocumentEmbedder used in an index. It receives a list of documents from DocumentSplitter and then sends the embedded documents to DocumentWriter:

Here's the YAML configuration:

components:

DocumentSplitter:

type: haystack.components.preprocessors.document_splitter.DocumentSplitter

init_parameters:

split_by: word

split_length: 200

split_overlap: 0

split_threshold: 0

splitting_function: null

DeepsetNvidiaDocumentEmbedder:

type: deepset_cloud_custom_nodes.embedders.nvidia.document_embedder.DeepsetNvidiaDocumentEmbedder

init_parameters:

model: intfloat/multilingual-e5-base

prefix: ''

suffix: ''

batch_size: 32

meta_fields_to_embed: null

embedding_separator: \n

truncate: null

normalize_embeddings: true

timeout: null

backend_kwargs: null

DocumentWriter:

type: haystack.components.writers.document_writer.DocumentWriter

init_parameters:

document_store:

type: haystack_integrations.document_stores.opensearch.document_store.OpenSearchDocumentStore

init_parameters:

embedding_dim: 1024

similarity: cosine

policy: NONE

connections:

- sender: DocumentSplitter.documents

receiver: DeepsetNvidiaDocumentEmbedder.documents

- sender: DeepsetNvidiaDocumentEmbedder.documents

receiver: DocumentWriter.documents

max_runs_per_component: 100

metadata: {}

Parameters

Init Parameters

These are the parameters you can configure in Pipeline Builder:

| Parameter | Type | Default | Description |

|---|---|---|---|

| model | DeepsetNVIDIAEmbeddingModels | DeepsetNVIDIAEmbeddingModels.INTFLOAT_MULTILINGUAL_E5_BASE | The model to use for calculating embeddings. Can be a specific model path like intfloat/multilingual-e5-base. |

| Choose the model from the list. | |||

| prefix | str | A string to add at the beginning of each document text. Can be used to prepend the text with an instruction, as required by some embedding models, such as E5 and bge. | |

| suffix | str | A string to add at the end of each document text. | |

| batch_size | int | 32 | The number of documents to embed at once. |

| meta_fields_to_embed | List[str] | None | None |

| embedding_separator | str | \n | Separator used to concatenate the meta fields to the document text. |

| truncate | EmbeddingTruncateMode | None | None |

| normalize_embeddings | bool | True | Whether to normalize the embeddings. Normalization is done by dividing the embedding by its L2 norm. |

| timeout | float | None | None |

| backend_kwargs | Dict[str, Any] | None | None |

Run Method Parameters

These are the parameters you can configure for the component's run() method. This means you can pass these parameters at query time through the API, in Playground, or when running a job. For details, see Modify Pipeline Parameters at Query Time.

| Parameter | Type | Default | Description |

|---|---|---|---|

| documents | List[Document] | Documents to embed. |

Was this page helpful?