Advanced Component Connections

Learn how to connect pipeline components in cases that go beyond simple matching. This includes handling incompatible connections or setting up complex routing.

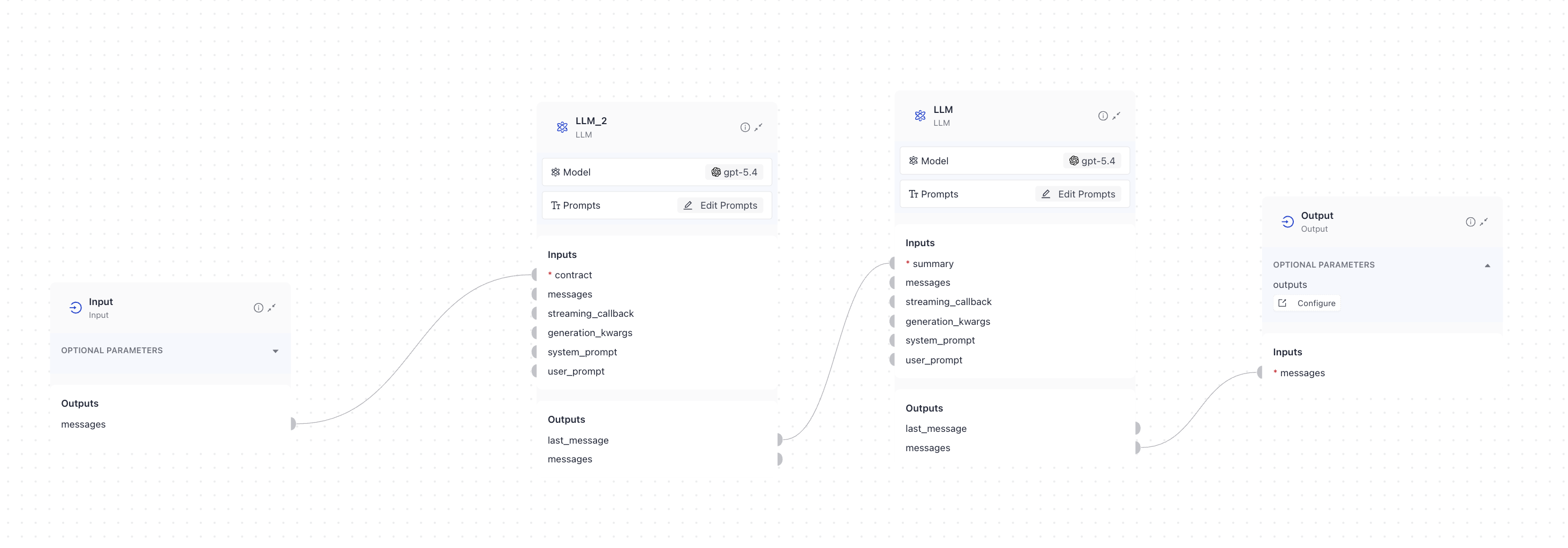

Connecting Two LLMs

You can connect two LLMs directly to each other. This is useful when you want to use the output of one LLM as the input for another LLM. Make sure there is a placeholder in the prompt template of the second LLM for the output of the first LLM.

Let's say you're building a pipeline that summarizes and simplifies complex contracts, such as bank loan contracts. The pipeline could include these steps:

- Summarize the contract: The first LLM summarizes the contract.

- Simplify the language: The second LLM simplifies the summarized contract.

The pipeline's configuration could look like this:

- The Input component receives the contract text and sends it to the first LLM component.

- The first LLM component generates a summary of the contract and sends it to the second LLM component.

- The second LLM component receives the summary and simplifies the language.

- The Output component receives the simplified contract and outputs it.
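The placeholder and the connection work as a pair. As the connections in the pipeline configuration show, the variable used in the second LLM's prompt template (summary) becomes an input of that component, and the connection from the first LLM targets that input by name. The trimmed excerpt below keeps only these two pieces. The one-line prompt is a shortened stand-in for illustration, and the excerpt leaves out other settings such as chat_generator, so it is not a complete pipeline on its own:

```yaml
# Trimmed excerpt: only the parts that link the two LLMs are shown.
components:
  LLM:
    type: haystack.components.generators.chat.llm.LLM
    init_parameters:
      user_prompt: >-
        {% message role="user" %}

        Simplify this contract summary for a 6th grader: {{ summary[0] }}

        {% endmessage %}
connections:
  # The first LLM (LLM_2) sends its output to the input named after
  # the {{ summary }} placeholder in the second LLM's prompt template.
  - sender: LLM_2.last_message
    receiver: LLM.summary
```

If you rename the placeholder, the receiver has to change with it. For example, with a hypothetical placeholder {{ contract_summary[0] }}, the receiver would become LLM.contract_summary.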

Example Pipeline Flow

Here is the pipeline:

```yaml
components:
  # Second step: simplifies the summary produced by LLM_2
  LLM:
    type: haystack.components.generators.chat.llm.LLM
    init_parameters:
      chat_generator:
        init_parameters:
          model: gpt-5.4
        type: haystack.components.generators.chat.openai_responses.OpenAIResponsesChatGenerator
      system_prompt: ""
      user_prompt: >-
        {% message role="user" %}

        You are an expert at explaining complex topics to children. Your task is
        to rewrite this contract summary so that a 6th grader (11-12 years old)
        can understand it. Here is the contract summary:

        {{ summary[0] }}

        Follow these rules when simplifying the summary:

        1. LANGUAGE RULES

        - Use simple words that a 6th grader knows

        - Keep sentences short (15 words or less)

        - Use active voice instead of passive voice

        - Replace legal terms with everyday words

        - Define any complex words you must use

        - Use "you" and "they" instead of formal names when possible

        2. EXPLANATION STYLE

        - Start each section with "This part is about..."

        - Use real-life examples that kids understand

        - Compare complex ideas to everyday situations

        - Break long explanations into smaller chunks

        - Use bullet points for lists

        - Add helpful "Think of it like..." comparisons

        3. ORGANIZATION

        - Group similar ideas together

        - Use friendly headers like "Money Stuff" instead of "Financial Terms"

        - Put the most important information first

        - Use numbered lists for steps or sequences

        - Add transition words (first, next, finally)

        4. MAKE IT RELATABLE

        - Compare contract parts to things kids know:
          * Compare deadlines to homework due dates
          * Compare payments to allowance
          * Compare obligations to classroom rules
          * Compare penalties to time-outs
          * Compare termination to quitting a sports team

        5. REQUIRED SECTIONS

        Simplify each of these areas:

        - Who is involved (like players on a team)

        - What each person must do (like chores)

        - Money and payments (like saving allowance)

        - Important dates (like calendar events)

        - Rules everyone must follow (like game rules)

        - What happens if someone breaks the rules

        - How to end the agreement

        - How to solve disagreements

        6. FORMAT YOUR ANSWER LIKE THIS:

        "Hi! I'm going to explain this contract in a way that's easy to understand. Think of a contract like a written promise between people or companies.

        [Then break down each section with simple explanations and examples]

        Remember: Even though we're making this simple, all parts of the original summary must be included - just explained in a kid-friendly way!"

        IMPORTANT REMINDERS:

        - Don't leave out any important information just because it's complex

        - Double-check that your explanations are accurate

        - Keep a friendly, encouraging tone

        - Use emoji or simple symbols to mark important points (⭐, 📅, 💰, ⚠️)

        - Break up long paragraphs

        - End with a simple summary of the most important points

        Your goal is to make the contract summary so clear that a 6th grader could explain it to their friend. Imagine you're the cool teacher who makes complicated stuff fun and easy to understand!

        {% endmessage %}
      required_variables: "*"
      streaming_callback:
  # First step: summarizes the contract
  LLM_2:
    type: haystack.components.generators.chat.llm.LLM
    init_parameters:
      chat_generator:
        init_parameters:
          model: gpt-5.4
        type: haystack.components.generators.chat.openai_responses.OpenAIResponsesChatGenerator
      system_prompt: ""
      user_prompt: >-
        {% message role="user" %}

        You are a legal document analyzer specializing in contract
        summarization. Your task is to analyze and summarize the following
        contract comprehensively.

        Contract to analyze: {{ contract }}

        Provide a structured summary that includes ALL of the following components:

        1. BASIC INFORMATION

        - Contract type

        - Parties involved (full legal names)

        - Effective date

        - Duration/term of contract

        - Governing law/jurisdiction

        2. KEY OBLIGATIONS

        - List all major responsibilities for each party

        - Highlight any conditional obligations

        - Note any performance metrics or standards

        - Identify delivery or service deadlines

        - Specify any reporting requirements

        3. FINANCIAL TERMS

        - Payment amounts and schedules

        - Currency specifications

        - Payment methods

        - Late payment penalties

        - Price adjustment mechanisms

        - Any additional fees or charges

        4. CRITICAL DATES & DEADLINES

        - Contract start date

        - Contract end date

        - Key milestone dates

        - Notice period requirements

        - Renewal deadlines

        - Termination notice periods

        5. TERMINATION & AMENDMENTS

        - Termination conditions

        - Early termination rights

        - Amendment procedures

        - Notice requirements

        - Consequences of termination

        6. WARRANTIES & REPRESENTATIONS

        - List all warranties provided

        - Duration of warranties

        - Warranty limitations

        - Representations made by each party

        - Disclaimer provisions

        7. LIABILITY & INDEMNIFICATION

        - Liability limitations

        - Indemnification obligations

        - Insurance requirements

        - Force majeure provisions

        - Exclusions and exceptions

        8. CONFIDENTIALITY & IP

        - Scope of confidential information

        - Duration of confidentiality

        - Intellectual property rights

        - Usage restrictions

        - Return/destruction requirements

        9. DISPUTE RESOLUTION

        - Resolution process

        - Arbitration requirements

        - Mediation procedures

        - Jurisdiction

        - Governing law

        10. SPECIAL PROVISIONS

        - Any unique or unusual terms

        - Special conditions

        - Specific industry requirements

        - Regulatory compliance requirements

        - Any attachments or referenced documents

        Format your response as follows:

        - Use clear headings for each section

        - Use bullet points for individual items

        - Bold any critical terms, dates, or amounts

        - Include section references from the original contract

        - Note if any standard section appears to be missing from the contract

        IMPORTANT GUIDELINES:

        - If any of the above sections are not present in the contract, explicitly note their absence

        - Flag any unusual or potentially problematic clauses

        - Highlight any ambiguous terms that might need clarification

        - Include exact quotes for crucial legal language

        - Note any apparent gaps or inconsistencies in the contract

        - Identify any terms that appear to deviate from standard industry practice

        The summary must be comprehensive enough to serve as a quick reference for all material aspects of the contract while highlighting any areas requiring special attention or clarification.

        {% endmessage %}
      required_variables: "*"
      streaming_callback:
connections:
  # The summary from LLM_2 fills the {{ summary }} placeholder of LLM
  - sender: LLM_2.last_message
    receiver: LLM.summary
max_runs_per_component: 100
metadata: {}
inputs:
  messages:
    - LLM_2.contract
outputs:
  messages: LLM.messages
```

Merging Multiple Outputs

With smart connections, a single component input can accept connections from multiple senders that output lists of the same type. This means you no longer need DocumentJoiner or ListJoiner in most cases. For step-by-step instructions, see Simplify Your Pipelines with Smart Connections.
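A minimal sketch of such a setup, assuming a pipeline that contains two retrievers named OpenSearchEmbeddingRetriever and OpenSearchBM25Retriever and an LLM component whose prompt template uses a documents variable (all three names are assumptions for this example). Both retrievers output a list of documents, so both can connect to the same input without a joiner. Only the connections section is shown:

```yaml
connections:
  # Both senders output a list of documents, so with smart connections
  # they can feed the same LLM input directly, without a joiner.
  - sender: OpenSearchEmbeddingRetriever.documents
    receiver: LLM.documents
  - sender: OpenSearchBM25Retriever.documents
    receiver: LLM.documents
```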

If you still need to merge outputs in a specific way, you can use a component from the Joiners group. Options include AnswerJoiner, DocumentJoiner, BranchJoiner, and StringJoiner.
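For example, to fuse the results of two retrievers by rank instead of simply combining both lists, you could place a DocumentJoiner between the retrievers and the component that consumes the documents. This is a sketch under assumptions: the component names OpenSearchEmbeddingRetriever, OpenSearchBM25Retriever, and LLM are hypothetical, and reciprocal_rank_fusion is just one of the join modes DocumentJoiner offers:

```yaml
components:
  DocumentJoiner:
    type: haystack.components.joiners.document_joiner.DocumentJoiner
    init_parameters:
      # Fuse the ranked lists from both retrievers using reciprocal rank fusion
      join_mode: reciprocal_rank_fusion
      # Keep at most 10 documents after joining
      top_k: 10
connections:
  # Both retrievers send their documents to the joiner...
  - sender: OpenSearchEmbeddingRetriever.documents
    receiver: DocumentJoiner.documents
  - sender: OpenSearchBM25Retriever.documents
    receiver: DocumentJoiner.documents
  # ...and the joiner passes the single merged list on.
  - sender: DocumentJoiner.documents
    receiver: LLM.documents
```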

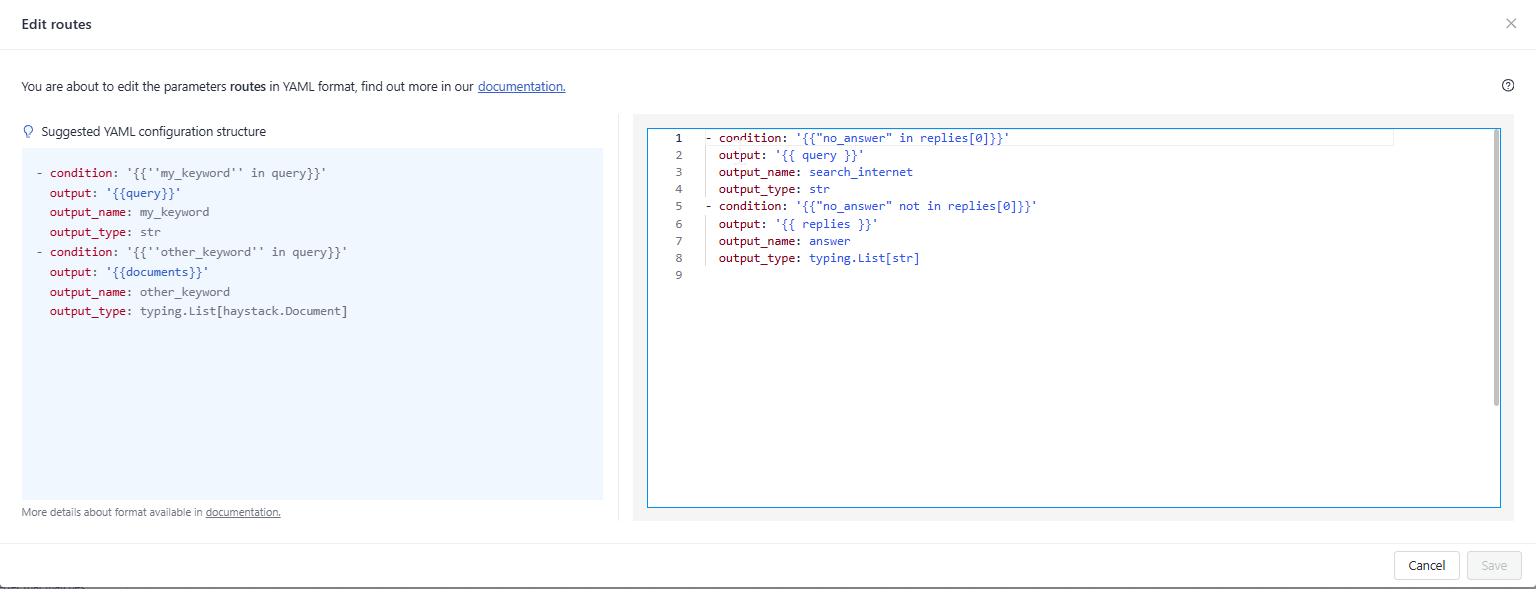

Routing Data Based on a Condition

You can route a component’s output to different branches in your pipeline based on specific conditions. The ConditionalRouter component handles this by using rules that you define.

The conditions for routing are specified in the ConditionalRouter’s routes parameter. Each route includes the following elements:

- condition: A Jinja2 expression that determines whether the route is used.

- output: A Jinja2 expression that defines the data passed to the route.

- output_name: The name of the route output, used to connect to other components.

- output_type: The data type of the route output.

Imagine a pipeline that:

- Uses an LLM to answer user queries based on provided documents.

- Falls back to a web search if the LLM cannot find an answer.

You would need two routes:

- One for queries the LLM cannot answer (no_answer is present).

- One for queries the LLM can answer based on the documents.

This is how you could configure the routes:

```yaml
- condition: '{{"no_answer" in replies[0]}}'
  output: '{{ query }}'
  output_name: search_internet
  output_type: str
- condition: '{{"no_answer" not in replies[0]}}'
  output: '{{ replies }}'
  output_name: answer
  output_type: typing.List[str]
```

The first route is used if no_answer appears in the LLM's first reply (replies[0]). This route outputs the query and is labeled search_internet. The second route is used if no_answer is not present. This route outputs the LLM's replies and is labeled answer.
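The output_name of each route becomes an output of the ConditionalRouter that you can use as a sender, and the variables referenced in the conditions and outputs (replies and query here) become its inputs. The following excerpt of the connections for this example is taken from the full pipeline configuration, with comments added:

```yaml
connections:
  # The LLM's replies feed the router's replies input, used in both conditions.
  - sender: LLM.messages
    receiver: ConditionalRouter.replies
  # output_name: search_internet leads to the web search branch
  - sender: ConditionalRouter.search_internet
    receiver: LLM_2.query
  # output_name: answer leads to the document-based answer
  - sender: ConditionalRouter.answer
    receiver: AnswerBuilder.replies
inputs:
  query:
    # The query input is what the search_internet route outputs.
    - ConditionalRouter.query
```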

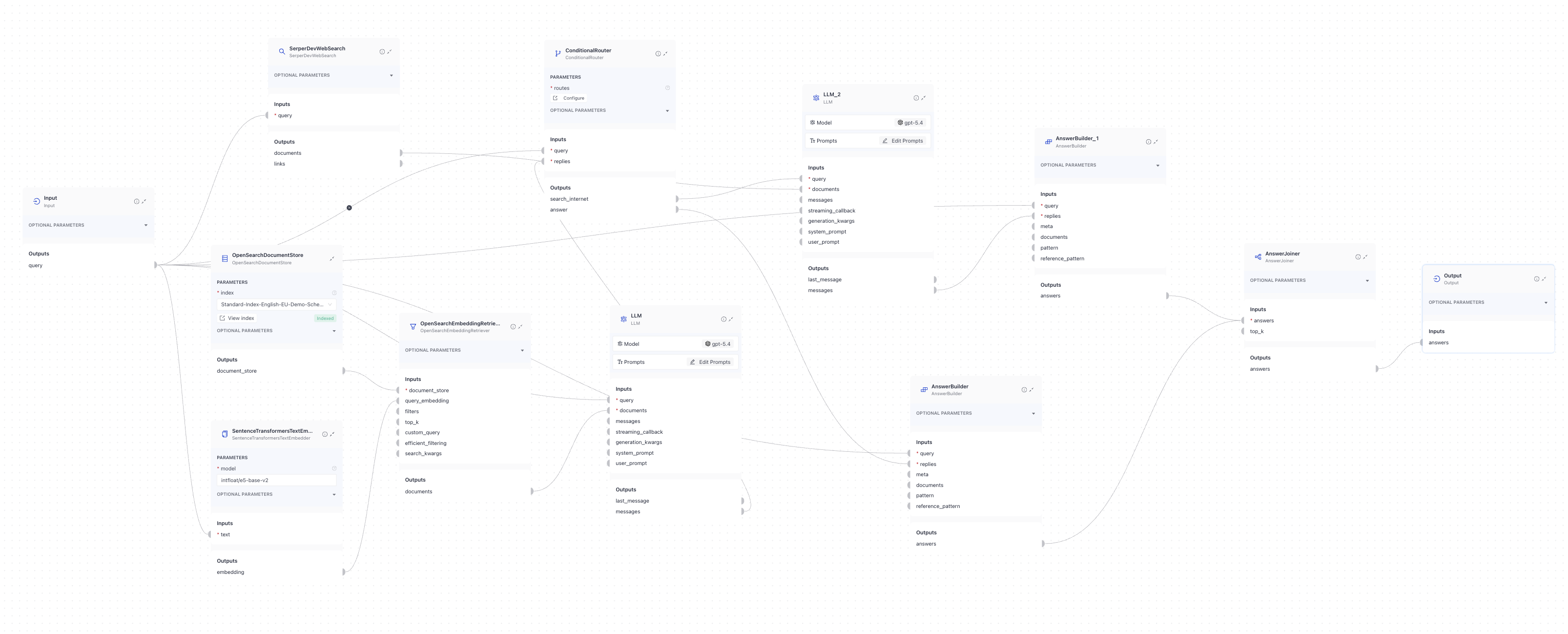

In this setup:

- An LLM is configured to reply with no_answer if it cannot find an answer based on the documents.

- The ConditionalRouter checks the LLM's reply and decides:

  - If the reply contains no_answer, the query goes to the web search branch, where a second LLM (LLM_2) answers it using the results that the SerperDevWebSearch component found on the internet.

  - If no_answer is not present, the reply is sent to the AnswerBuilder to format and output the LLM's response.

This is what this part of the pipeline could look like:

```yaml
components:
  SerperDevWebSearch:
    # To use this component, you need a SerperDev API key.
    type: haystack.components.websearch.serper_dev.SerperDevWebSearch
    init_parameters:
      api_key:
        type: env_var
        env_vars:
          - serper_dev
        strict: false
      top_k: 5
      allowed_domains:
      search_params:
  ConditionalRouter:
    type: haystack.components.routers.conditional_router.ConditionalRouter
    init_parameters:
      routes:
        - condition: '{{"no_answer" in replies[0]}}'
          output: '{{ query }}'
          output_name: search_internet
          output_type: str
        - condition: '{{"no_answer" not in replies[0]}}'
          output: '{{ replies }}'
          output_name: answer
          output_type: typing.List[str]
      custom_filters: {}
      unsafe: false
      validate_output_type: false
  OpenSearchEmbeddingRetriever:
    type: haystack_integrations.components.retrievers.opensearch.embedding_retriever.OpenSearchEmbeddingRetriever
    init_parameters:
      document_store:
        type: haystack_integrations.document_stores.opensearch.document_store.OpenSearchDocumentStore
        init_parameters:
          use_ssl: true
          verify_certs: false
          hosts:
            - ${OPENSEARCH_HOST}
          http_auth:
            - ${OPENSEARCH_USER}
            - ${OPENSEARCH_PASSWORD}
          embedding_dim: 768
          similarity: cosine
          index: Standard-Index-English-EU-Demo-Schengen
          max_chunk_bytes: 104857600
          return_embedding: false
          method:
          mappings:
          settings:
            index.knn: true
          create_index: true
          timeout:
      filters:
      top_k: 10
      filter_policy: replace
      custom_query:
      raise_on_failure: true
      efficient_filtering: false
  AnswerJoiner:
    type: haystack.components.joiners.answer_joiner.AnswerJoiner
    init_parameters:
      join_mode: concatenate
      top_k:
      sort_by_score: false
  AnswerBuilder:
    type: haystack.components.builders.answer_builder.AnswerBuilder
    init_parameters:
      pattern:
      reference_pattern:
  AnswerBuilder_1:
    type: haystack.components.builders.answer_builder.AnswerBuilder
    init_parameters:
      pattern:
      reference_pattern:
  SentenceTransformersTextEmbedder:
    type: haystack.components.embedders.sentence_transformers_text_embedder.SentenceTransformersTextEmbedder
    init_parameters:
      model: intfloat/e5-base-v2
      device:
      token:
      prefix: ''
      suffix: ''
      batch_size: 32
      progress_bar: true
      normalize_embeddings: false
      trust_remote_code: false
      truncate_dim:
      model_kwargs:
      tokenizer_kwargs:
      config_kwargs:
      precision: float32
  # Answers from the retrieved documents, or replies with 'no_answer'
  LLM:
    type: haystack.components.generators.chat.llm.LLM
    init_parameters:
      chat_generator:
        init_parameters:
          model: gpt-5.4
        type: haystack.components.generators.chat.openai_responses.OpenAIResponsesChatGenerator
      system_prompt: ""
      user_prompt: >-
        {% message role="user" %}

        Answer the following query given the documents.
        If the answer is not contained within the documents reply with
        'no_answer'

        Query: {{query}}

        Documents:

        {% for document in documents %}

        {{document.content}}

        {% endfor %}

        {% endmessage %}
      required_variables: "*"
      streaming_callback:
  # Answers from the web search results when the router takes the search_internet route
  LLM_2:
    type: haystack.components.generators.chat.llm.LLM
    init_parameters:
      chat_generator:
        init_parameters:
          model: gpt-5.4
        type: haystack.components.generators.chat.openai_responses.OpenAIResponsesChatGenerator
      system_prompt: ""
      user_prompt: >-
        {% message role="user" %}

        Answer the following query given the documents retrieved from the web.
        Your answer should indicate that your answer was generated from
        websearch.

        Query: {{query}}

        Documents:

        {% for document in documents %}

        {{document.content}}

        {% endfor %}

        {% endmessage %}
      required_variables: "*"
      streaming_callback:
connections:
  - sender: AnswerBuilder.answers
    receiver: AnswerJoiner.answers
  - sender: AnswerBuilder_1.answers
    receiver: AnswerJoiner.answers
  - sender: SentenceTransformersTextEmbedder.embedding
    receiver: OpenSearchEmbeddingRetriever.query_embedding
  - sender: OpenSearchEmbeddingRetriever.documents
    receiver: LLM.documents
  - sender: LLM.messages
    receiver: ConditionalRouter.replies
  - sender: SerperDevWebSearch.documents
    receiver: LLM_2.documents
  - sender: ConditionalRouter.search_internet
    receiver: LLM_2.query
  - sender: LLM_2.messages
    receiver: AnswerBuilder_1.replies
  - sender: ConditionalRouter.answer
    receiver: AnswerBuilder.replies
max_runs_per_component: 100
metadata: {}
inputs:
  query:
    - ConditionalRouter.query
    - AnswerBuilder.query
    - AnswerBuilder_1.query
    - SerperDevWebSearch.query
    - SentenceTransformersTextEmbedder.text
    - LLM.query
outputs:
  answers: AnswerJoiner.answers
```
