DeepsetNvidiaNIMDocumentEmbedder

Embeds documents using NVIDIA NIM models running on hardware optimized by deepset.

Embedding Models in Query Pipelines and Indexes

The embedding model you use to embed documents in your indexing pipeline must be the same as the embedding model you use to embed the query in your query pipeline.

For example, if you use CohereDocumentEmbedder to embed your documents, use CohereTextEmbedder with the same model to embed your queries.
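The matching rule can be checked mechanically. The following is a minimal sketch, assuming both pipeline definitions have already been loaded into Python dictionaries shaped like the `components` section of a pipeline YAML. The helper names and the `example.embedders.HypotheticalQueryEmbedder` type are illustrative assumptions, not part of any deepset API.

```python
def embedder_models(components: dict) -> set:
    """Collect the `model` setting of every component whose type ends in 'Embedder'."""
    return {
        spec["init_parameters"]["model"]
        for spec in components.values()
        if spec["type"].endswith("Embedder")
    }


def embedding_models_match(index_components: dict, query_components: dict) -> bool:
    """Return True only if both pipelines use the same single embedding model."""
    index_models = embedder_models(index_components)
    query_models = embedder_models(query_components)
    return len(index_models) == 1 and index_models == query_models


index_components = {
    "document_embedder": {
        "type": "deepset_cloud_custom_nodes.embedders.nvidia.nim_document_embedder.DeepsetNvidiaNIMDocumentEmbedder",
        "init_parameters": {"model": "nvidia/nv-embedqa-e5-v5"},
    },
}
query_components = {
    "query_embedder": {
        # Hypothetical type path, used only to keep the sketch self-contained.
        "type": "example.embedders.HypotheticalQueryEmbedder",
        "init_parameters": {"model": "nvidia/nv-embedqa-e5-v5"},
    },
}

print(embedding_models_match(index_components, query_components))
```

A check like this could run before deployment to catch an index and a query pipeline that drifted to different models.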

Key Features

- Embeds documents using NVIDIA NIM models on deepset-optimized hardware.

- Models are pre-loaded — no download at query time.

- Stores computed embeddings in each document's embedding field.

- Configurable batch size and metadata field embedding.

- Supports embedding normalization.

- Available only on deepset AI Platform.

Configuration

- Drag the DeepsetNvidiaNIMDocumentEmbedder component onto the canvas from the Component Library.

- Click the component to open the configuration panel.

- On the General tab:

  - Select the embedding model from the list on the component card, such as nvidia/nv-embedqa-e5-v5.

- Go to the Advanced tab to configure prefix and suffix text, batch size, metadata fields to embed, embedding separator, truncation mode, normalization, and timeout.
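To make the Advanced tab settings more concrete, here is a minimal sketch of how prefix, suffix, metadata fields, the embedding separator, and batch size typically combine. It assumes the component follows the same convention as Haystack's open source document embedders (metadata values first, then the document text, joined by the separator). The function names and the `passage: ` prefix are illustrative assumptions, not the component's actual implementation.

```python
from typing import Any, Dict, Iterator, List, Optional


def prepare_text(
    content: str,
    meta: Dict[str, Any],
    prefix: str = "",
    suffix: str = "",
    meta_fields_to_embed: Optional[List[str]] = None,
    embedding_separator: str = "\n",
) -> str:
    """Build the string that would be sent to the embedding model for one document."""
    # Keep only the requested metadata fields that are present and not None.
    meta_values = [
        str(meta[key])
        for key in (meta_fields_to_embed or [])
        if meta.get(key) is not None
    ]
    return prefix + embedding_separator.join(meta_values + [content]) + suffix


def batches(items: List[Any], batch_size: int = 32) -> Iterator[List[Any]]:
    """Yield consecutive slices of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


text = prepare_text(
    "NIM serves embedding models.",
    {"title": "Overview"},
    prefix="passage: ",  # illustrative instruction prefix
    meta_fields_to_embed=["title"],
)
print(repr(text))  # 'passage: Overview\nNIM serves embedding models.'

batch_sizes = [len(batch) for batch in batches(list(range(70)), batch_size=32)]
print(batch_sizes)  # [32, 32, 6]
```

With a batch size of 32, 70 documents are sent in three requests, and the last one carries the remaining 6 documents.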

Connections

DeepsetNvidiaNIMDocumentEmbedder receives a list of documents as input and outputs those documents with embeddings added to their embedding field, along with a meta dictionary with usage statistics.

Connect a preprocessor (such as DocumentSplitter) or joiner to its documents input. Connect its documents output to DocumentWriter to store the embedded documents.

Usage Example

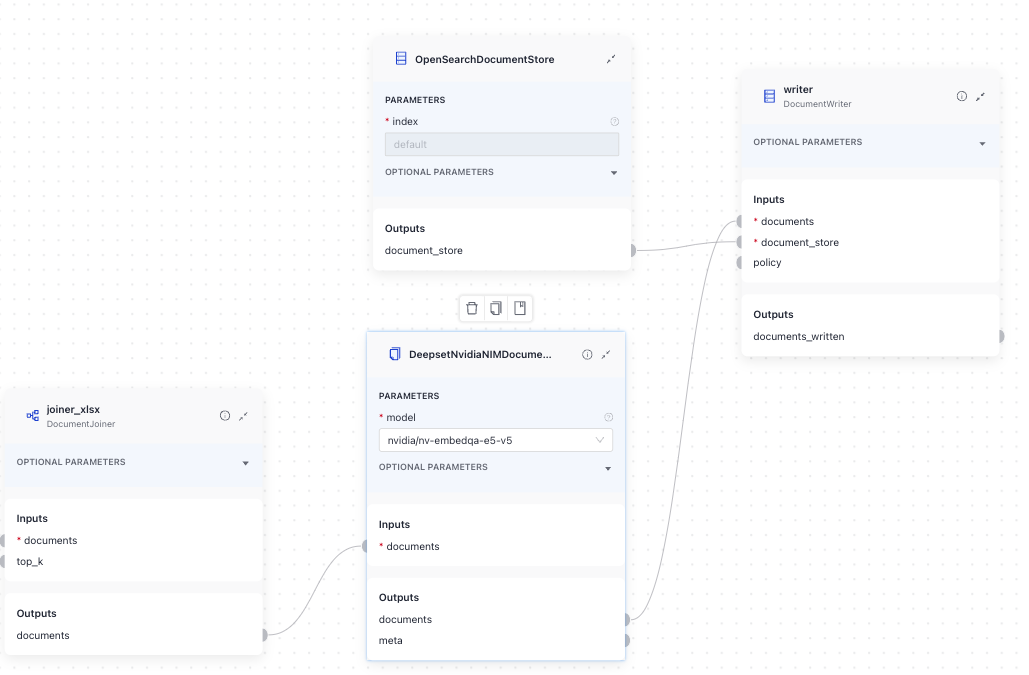

This is an example of a DeepsetNvidiaNIMDocumentEmbedder used in an index. It receives a list of documents from DocumentJoiner and sends the embedded documents to DocumentWriter:

Here's the YAML configuration:

    components:
      joiner_xlsx:  # merge split documents with non-split xlsx documents
        type: haystack.components.joiners.document_joiner.DocumentJoiner
        init_parameters:
          join_mode: concatenate
          sort_by_score: false

      DeepsetNvidiaNIMDocumentEmbedder:
        type: deepset_cloud_custom_nodes.embedders.nvidia.nim_document_embedder.DeepsetNvidiaNIMDocumentEmbedder
        init_parameters:
          model: nvidia/nv-embedqa-e5-v5
          prefix: ''
          suffix: ''
          batch_size: 32
          meta_fields_to_embed:
          embedding_separator: "\n"
          truncate:
          normalize_embeddings: true
          timeout:
          backend_kwargs:

      writer:
        type: haystack.components.writers.document_writer.DocumentWriter
        init_parameters:
          document_store:
            type: haystack_integrations.document_stores.opensearch.document_store.OpenSearchDocumentStore
            init_parameters:
              hosts:
              index: default
              max_chunk_bytes: 104857600
              embedding_dim: 768
              return_embedding: false
              method:
              mappings:
              settings:
              create_index: true
              http_auth:
              use_ssl:
              verify_certs:
              timeout:
          policy: OVERWRITE

    connections:
      - sender: joiner_xlsx.documents
        receiver: DeepsetNvidiaNIMDocumentEmbedder.documents
      - sender: DeepsetNvidiaNIMDocumentEmbedder.documents
        receiver: writer.documents
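Every sender and receiver under connections has to name a component defined under components, so a quick consistency check can catch typos in component names. The following is a minimal sketch, assuming the YAML has already been loaded into a Python dictionary. The `undefined_endpoints` helper is a hypothetical name and not part of Haystack or deepset AI Platform.

```python
from typing import Dict, List


def undefined_endpoints(pipeline: Dict) -> List[str]:
    """Return every connection endpoint whose component name is not defined."""
    defined = set(pipeline["components"])
    missing = []
    for connection in pipeline["connections"]:
        for role in ("sender", "receiver"):
            # An endpoint has the form "<component_name>.<socket_name>".
            component_name = connection[role].split(".", 1)[0]
            if component_name not in defined:
                missing.append(connection[role])
    return missing


pipeline = {
    "components": {
        "joiner_xlsx": {},
        "DeepsetNvidiaNIMDocumentEmbedder": {},
        "writer": {},
    },
    "connections": [
        {
            "sender": "joiner_xlsx.documents",
            "receiver": "DeepsetNvidiaNIMDocumentEmbedder.documents",
        },
        {
            "sender": "DeepsetNvidiaNIMDocumentEmbedder.documents",
            "receiver": "writer.documents",
        },
    ],
}

# An empty list means every endpoint refers to a defined component.
print(undefined_endpoints(pipeline))
```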

Parameters

Inputs

| Parameter | Type | Default | Description |
|---|---|---|---|
| documents | List[Document] | | Documents to embed. |

Outputs

| Parameter | Type | Default | Description |
|---|---|---|---|
| documents | List[Document] | | Documents with embeddings added to their embedding field. |
| meta | Dict[str, Any] | | Metadata with usage statistics. |

Init Parameters

These are the parameters you can configure in Pipeline Builder:

| Parameter | Type | Default | Description |
|---|---|---|---|
| model | DeepsetNvidiaNIMEmbeddingModels | DeepsetNvidiaNIMEmbeddingModels.NVIDIA_NV_EMBEDQA_E5_V5 | The model to use for calculating embeddings. Choose the model from the list. |
| prefix | str | "" | A string to add at the beginning of each document text. Can be used to prepend the text with an instruction, as required by some embedding models, such as E5 and bge. |
| suffix | str | "" | A string to add at the end of each document text. |
| batch_size | int | 32 | The number of documents to embed at once. |
| meta_fields_to_embed | List[str] \| None | None | List of meta fields to embed along with the document text. |
| embedding_separator | str | \n | Separator used to concatenate the meta fields to the document text. |
| truncate | EmbeddingTruncateMode \| None | None | Specifies how to truncate inputs longer than the maximum token length. Possible options are START, END, and NONE. If set to START, the input is truncated from the start. If set to END, the input is truncated from the end. If set to NONE, an error is returned if the input is too long. |
| normalize_embeddings | bool | True | Whether to normalize the embeddings. Normalization is done by dividing the embedding by its L2 norm. |
| timeout | float \| None | None | Timeout for request calls in seconds. |
| backend_kwargs | Dict[str, Any] \| None | None | Keyword arguments to further customize the model behavior. |
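The normalize_embeddings and truncate descriptions can be illustrated with a short sketch in plain Python. It assumes that START drops tokens from the beginning of the input and END drops tokens from the end, which is one reading of the descriptions above. The function names and the token lists are illustrative only, and the real truncation happens inside the model service.

```python
import math
from typing import List


def l2_normalize(vector: List[float]) -> List[float]:
    """Divide a vector by its L2 norm so the result has unit length."""
    norm = math.sqrt(sum(value * value for value in vector))
    if norm == 0.0:
        # A zero vector has no direction, so return it unchanged.
        return list(vector)
    return [value / norm for value in vector]


def truncate_tokens(tokens: List[str], max_tokens: int, mode: str = "NONE") -> List[str]:
    """Shorten a token list to max_tokens according to the truncation mode."""
    if len(tokens) <= max_tokens:
        return list(tokens)
    if mode == "START":
        # Assumed meaning: cut from the start, keeping the last max_tokens tokens.
        return tokens[-max_tokens:]
    if mode == "END":
        # Assumed meaning: cut from the end, keeping the first max_tokens tokens.
        return tokens[:max_tokens]
    raise ValueError(f"Input has {len(tokens)} tokens, the limit is {max_tokens}.")


unit = l2_normalize([3.0, 4.0])
print(unit)

tokens = ["a", "b", "c", "d", "e"]
print(truncate_tokens(tokens, 3, mode="START"))  # ['c', 'd', 'e']
print(truncate_tokens(tokens, 3, mode="END"))    # ['a', 'b', 'c']
```

After normalization, the dot product of two embeddings equals their cosine similarity, which is why normalized embeddings are convenient for similarity search.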

Run Method Parameters

These are the parameters you can configure for the component's run() method. This means you can pass these parameters at query time through the API, in Playground, or when running a job. For details, see Modify Pipeline Parameters at Query Time.

| Parameter | Type | Default | Description |
|---|---|---|---|
| documents | List[Document] | | Documents to embed. |
